Overview

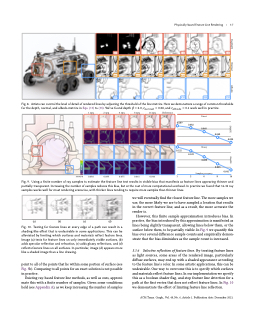

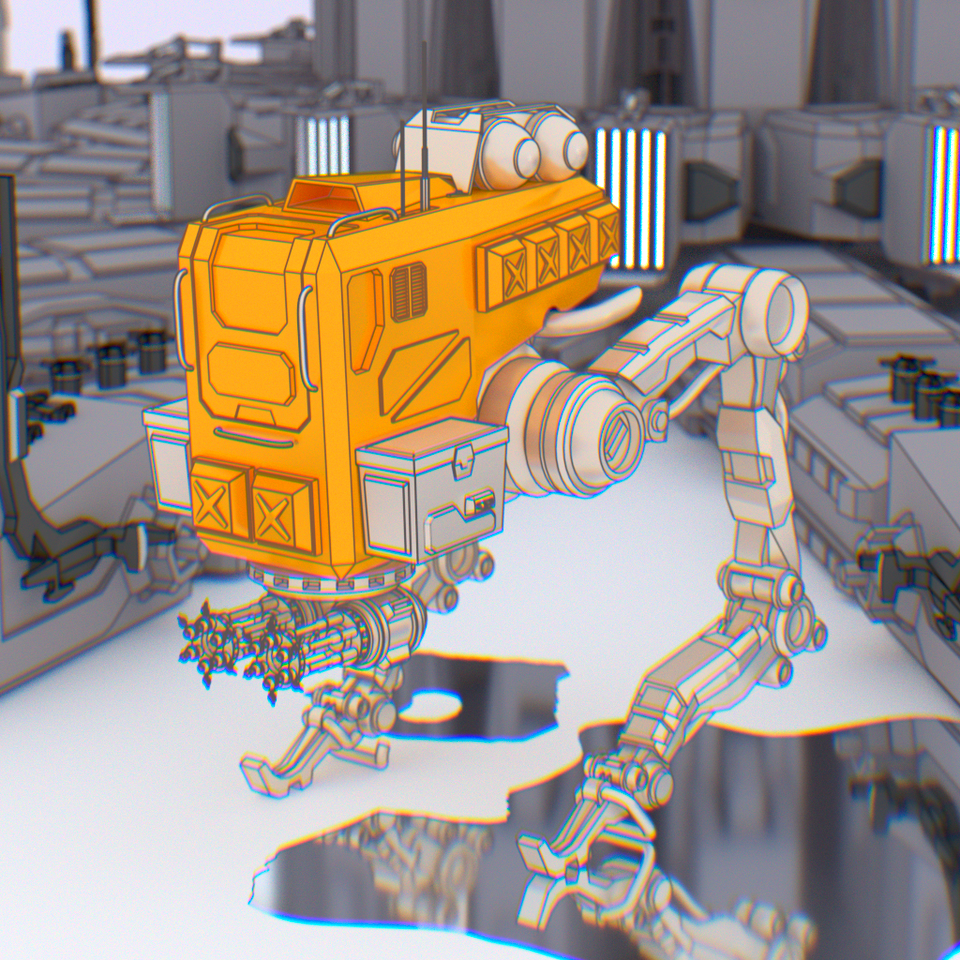

In this paper we present a path-based method for incorporating feature lines into physically-based rendering by modeling them as view-dependent, implicit light sources.

This extends feature line rendering to a wide variety of physical effects, such as lens blur and dispersion, that were previously approximated with screen-space post-processing and compositing. These effects can now be simulated accurately and combined seamlessly, opening up new avenues of creative expression for artists.

Battle sprinter and UFO models courtesy of Wundersound and DimitrisC

Astreo Type 3 car model courtesy of Jorgeferrer

Alarm clock model courtesy of chams

Abstract

Feature lines visualize the shape and structure of 3D objects, and are an essential component of many non-photorealistic rendering styles. Existing feature line rendering methods, however, are only able to render feature lines in limited contexts, such as on immediately visible surfaces or in specular reflections. We present a novel, path-based method for feature line rendering that allows for the accurate rendering of feature lines in the presence of complex physical phenomena such as glossy reflection, depth-of-field, and dispersion. Our key insight is that feature lines can be modeled as view-dependent light sources. These light sources can be sampled as part of ordinary paths and integrate seamlessly into existing physically-based rendering methods. We illustrate the effectiveness of our method in several real-world rendering scenarios with a variety of different physical phenomena.

Presentation Video

This is the SIGGRAPH Asia 2021 presentation video for the paper Physically-based Feature Line Rendering. It gives a brief introduction to existing 3D line rendering methods and explains the appeal of ray-based methods. It then shows how a ray-based approach can be integrated into path tracing to render lines in the presence of physical effects such as glossy reflections, depth-of-field, and spectral dispersion.

Supplementary Materials

Coming soon.

Authors